As a DevOps and SRE engineer, I have spent a lot of time building automated, reliable pipelines and cloud platforms. Over recent years, I have been applying the same principles to machine learning and AI projects.

One of those projects is CarHunch, a vehicle insights platform built on MOT data at scale. Building it made one thing clear: DevOps practices map directly onto MLOps, in the form of versioning datasets and models, tracking experiments, and automating deployment workflows.

To make that bridge easy to explore, this post presents a minimal, reproducible demo using MiniLM (a small transformer model, used here to produce sentence embeddings) and MLflow.

Source code: github.com/DonaldSimpson/mlops_minilm_demo

The quick way: make run

The quickest path is the included Makefile. If you have Docker installed, run:

```bash
# clone the repo
git clone https://github.com/DonaldSimpson/mlops_minilm_demo.git
cd mlops_minilm_demo

# build and run everything (training + MLflow UI)
make run
```

That single command will:

- Spin up a containerised environment

- Run the training script (MiniLM embeddings + Logistic Regression)

- Start the MLflow tracking server and UI

Once it is up, open http://localhost:5001 to explore the logged experiments.

What the demo shows

- MiniLM embeddings convert short MOT-style notes into vectors

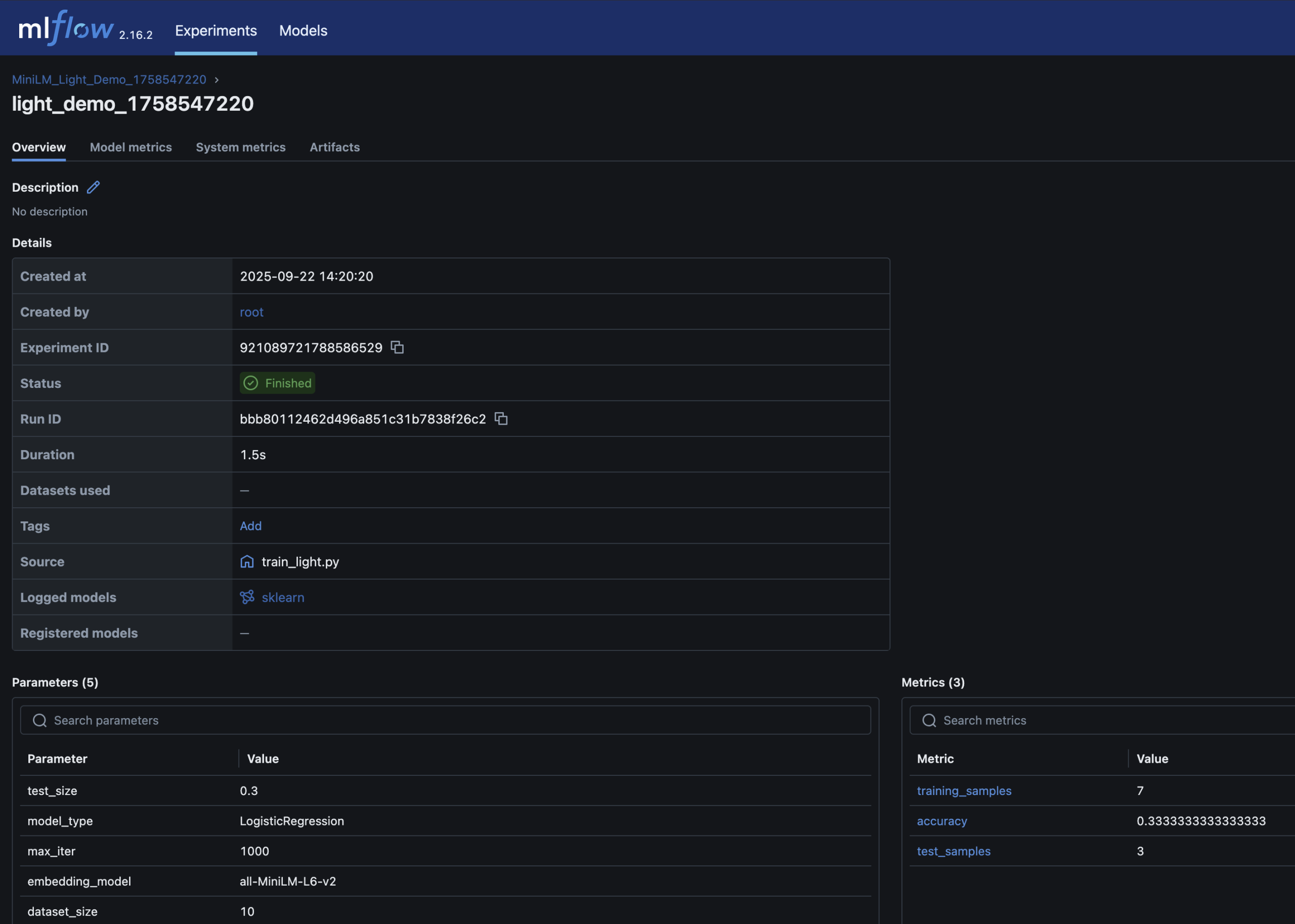

- A Logistic Regression classifier predicts pass/fail

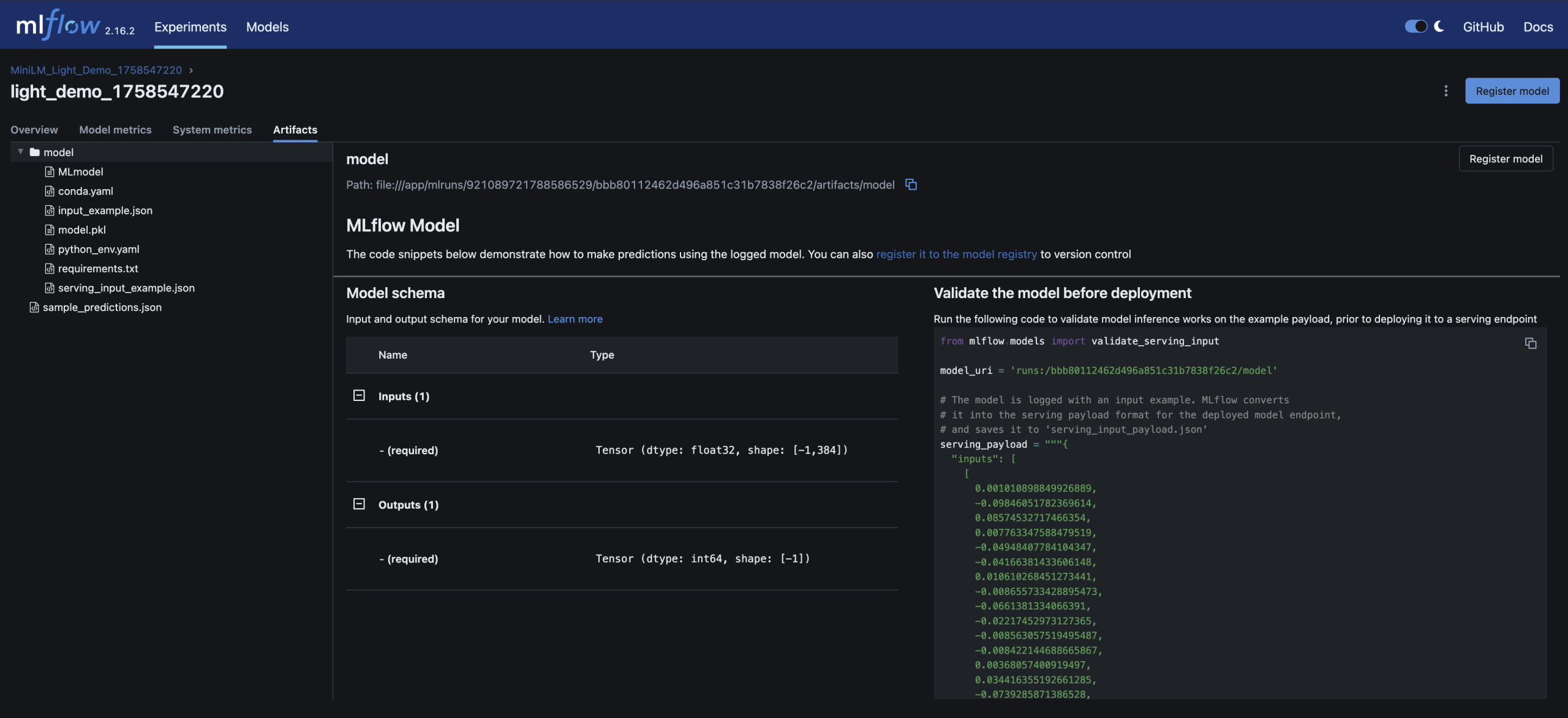

- Parameters, metrics (accuracy), and the trained model are logged in MLflow

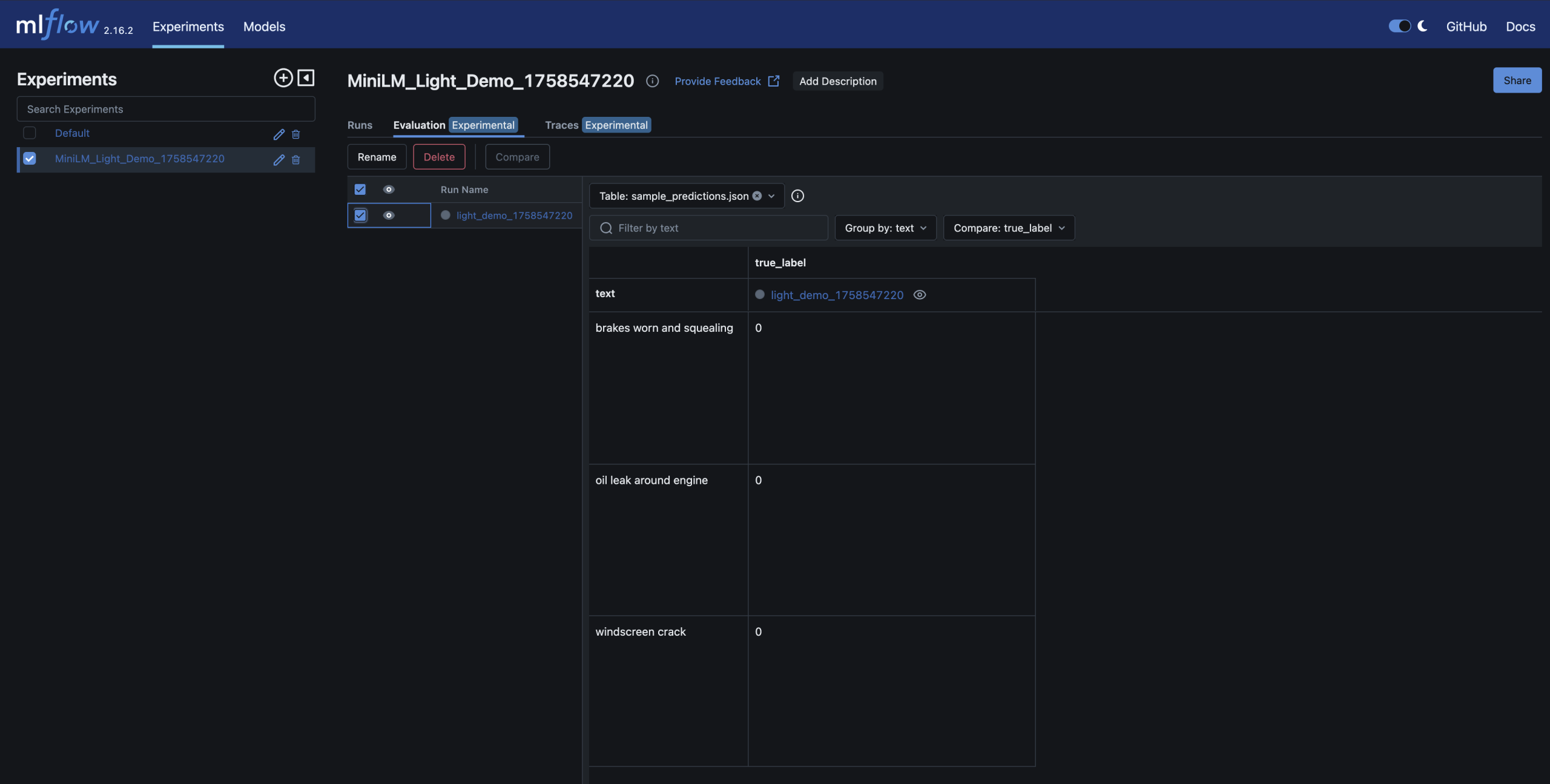

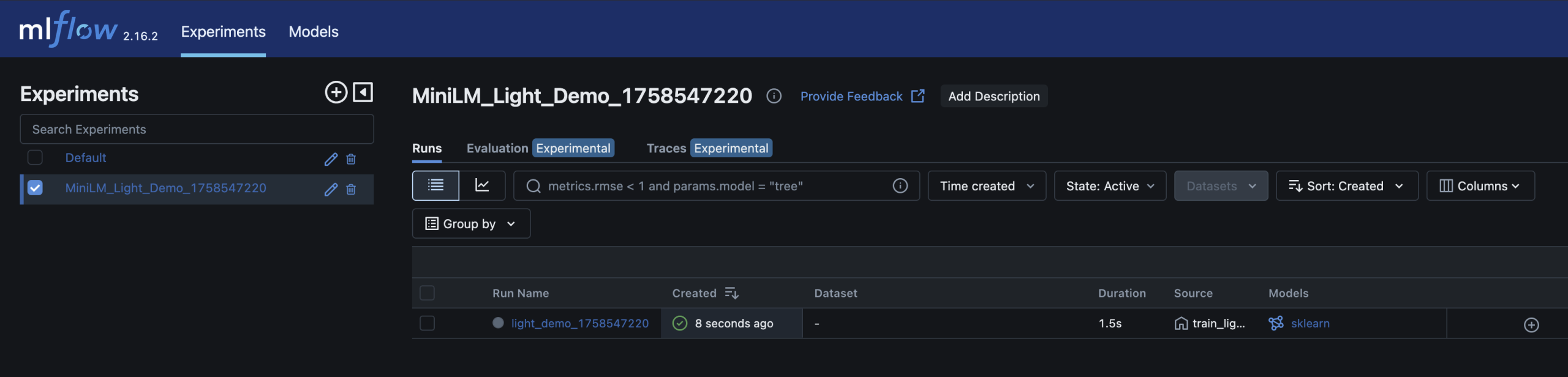

- Runs can be inspected and compared in the MLflow UI, similar to CI artifacts

- Run details, metrics, and the model artifact stay together for traceability

The screenshots above include the relevant MLflow UI views.
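To make the flow concrete without needing any of the repo's dependencies, here is a minimal, standard-library-only Python sketch of the same stages. It is not the code in the repo: it swaps MiniLM for a hashed bag-of-words embedding and uses a hand-rolled logistic regression, and the notes, labels and names (`NOTES`, `embed`, `run_experiment`) are hypothetical. The dataset fingerprint is my own addition, to illustrate tying a data version to a run. The returned dictionary mirrors what the demo records in MLflow (parameters and an accuracy metric); with MLflow installed, calls along the lines of `mlflow.log_params(...)` and `mlflow.log_metric("accuracy", ...)` would record it instead.

```python
import hashlib
import json
import math

# Hypothetical toy notes (1 = pass, 0 = fail); NOT the repo's data.
NOTES = [
    ("brake pads worn below limit", 0),
    ("headlamp aim incorrect", 0),
    ("tyre tread below legal limit", 0),
    ("exhaust emissions over limit", 0),
    ("passed with no advisories", 1),
    ("minor advisory on wiper blades", 1),
    ("passed with no defects", 1),
    ("advisory tyre slightly worn", 1),
]

DIM = 32  # toy embedding size (assumption; real MiniLM vectors are much larger)


def embed(text, dim=DIM):
    """Stand-in for MiniLM: hashed bag-of-words, L2-normalised."""
    vec = [0.0] * dim
    for token in text.lower().split():
        digest = hashlib.sha256(token.encode("utf-8")).digest()
        vec[int.from_bytes(digest[:4], "big") % dim] += 1.0
    norm = math.sqrt(sum(v * v for v in vec)) or 1.0
    return [v / norm for v in vec]


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def train_logreg(X, y, lr=0.5, epochs=500):
    """Logistic regression fitted by full-batch gradient descent on log loss."""
    w, b, n = [0.0] * len(X[0]), 0.0, len(X)
    for _ in range(epochs):
        grad_w, grad_b = [0.0] * len(w), 0.0
        for xi, yi in zip(X, y):
            # Gradient of log loss for one example: (p - y) * x
            err = sigmoid(sum(wj * xj for wj, xj in zip(w, xi)) + b) - yi
            grad_w = [g + err * xj for g, xj in zip(grad_w, xi)]
            grad_b += err
        w = [wj - lr * g / n for wj, g in zip(w, grad_w)]
        b -= lr * grad_b / n
    return w, b


def predict(w, b, x):
    return int(sigmoid(sum(wj * xj for wj, xj in zip(w, x)) + b) >= 0.5)


def run_experiment(lr=0.5, epochs=500):
    """Train, evaluate and return a run record shaped like an MLflow run."""
    X = [embed(text) for text, _ in NOTES]
    y = [label for _, label in NOTES]
    w, b = train_logreg(X, y, lr, epochs)
    correct = sum(predict(w, b, xi) == yi for xi, yi in zip(X, y))
    return {
        "params": {"lr": lr, "epochs": epochs, "dim": DIM},
        "metrics": {"accuracy": correct / len(y)},  # training accuracy only
        # Fingerprint of the exact data used, so the run is traceable to it.
        "dataset_sha256": hashlib.sha256(json.dumps(NOTES).encode("utf-8")).hexdigest(),
    }


if __name__ == "__main__":
    print(json.dumps(run_experiment(), indent=2))
```

Accuracy here is measured on the training notes themselves, which is enough to show the plumbing but not a real evaluation; a held-out split would be the obvious next step.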

Why this matters for DevOps engineers

- Familiar workflow: MLflow plays a role for models similar to the one a CI system plays for code

- Quality gates: models can be promoted only when metrics pass thresholds

- Reproducibility: datasets, parameters, and artifacts are tied to runs

- Scalability: the same pattern carries over from a local demo to production workflows
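A quality gate can be as small as a threshold check that a CI job runs before promoting a model. The sketch below is hypothetical (the function names and the shape of the run dictionaries are mine, not MLflow's API): `passes_gate` treats every threshold as a minimum and fails on a missing metric, and `select_candidate` picks the best run that clears the gate, much like picking the latest green build.

```python
def passes_gate(metrics, thresholds):
    """Return (ok, failures). Each threshold is a minimum; a missing metric fails."""
    failures = []
    for name, minimum in thresholds.items():
        value = metrics.get(name)
        if value is None:
            failures.append(f"{name}: missing")
        elif value < minimum:
            failures.append(f"{name}: {value:.3f} < required {minimum:.3f}")
    return (len(failures) == 0, failures)


def select_candidate(runs, thresholds, key="accuracy"):
    """Return the best run (by `key`) that passes the gate, or None if none do."""
    # Hypothetical run shape: {"id": ..., "metrics": {"accuracy": ...}}
    eligible = [r for r in runs if passes_gate(r["metrics"], thresholds)[0]]
    if not eligible:
        return None
    return max(eligible, key=lambda r: r["metrics"].get(key, float("-inf")))
```

In a real pipeline the metrics would come from the tracking server, for example via `mlflow.get_run(run_id).data.metrics`, and the CI step would end with `sys.exit(0 if ok else 1)` so that a failed gate stops the promotion.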

Other ways to run it

If you prefer a different execution path, the repo also supports:

- Python virtualenv: install requirements.txt and run train_light.py

- Docker Compose: docker-compose up --build

- Make targets: make train_light (quick) and make train (full)

Next steps

Once comfortable with this demo, natural extensions are:

- Swap in real datasets such as DVLA MOT data

- Add data validation gates (for example Great Expectations)

- Introduce fairness checks with tools such as Fairlearn

- Run in Kubernetes (KinD/Argo) for reproducible orchestration

- Integrate GitHub Actions for end-to-end CI/CD
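Before reaching for Great Expectations, the idea of a data validation gate can be sketched in a few lines of plain Python. This is not the Great Expectations API, and the row format (`note` and `label` keys, with `pass`/`fail` strings) is an assumption for illustration. The check for both label classes matters because a binary classifier cannot be trained on a batch that contains only one class.

```python
# Assumed row format: {"note": str, "label": "pass" | "fail"} (illustrative only).
ALLOWED_LABELS = {"pass", "fail"}


def validate_rows(rows, min_rows=1):
    """Return a list of problems; an empty list means the batch may proceed."""
    problems = []
    if len(rows) < min_rows:
        problems.append(f"expected at least {min_rows} rows, got {len(rows)}")
    for i, row in enumerate(rows):
        note = row.get("note")
        if not isinstance(note, str) or not note.strip():
            problems.append(f"row {i}: empty or missing note")
        if row.get("label") not in ALLOWED_LABELS:
            problems.append(f"row {i}: unexpected label {row.get('label')!r}")
    # A binary classifier needs examples of both classes to train on.
    present = {row.get("label") for row in rows} & ALLOWED_LABELS
    if present != ALLOWED_LABELS:
        problems.append(f"missing label class(es): {sorted(ALLOWED_LABELS - present)}")
    return problems
```

Run before training, a non-empty result would stop the pipeline in the same way a failing lint step stops a build.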

Closing thoughts

DevOps and MLOps share the same DNA: versioning, automation, observability, and reproducibility. This demo repo is a practical bridge between the two.

Working on CarHunch provided the real-world context; this demo distills that into something any DevOps engineer can run locally.

Try it out: github.com/DonaldSimpson/mlops_minilm_demo